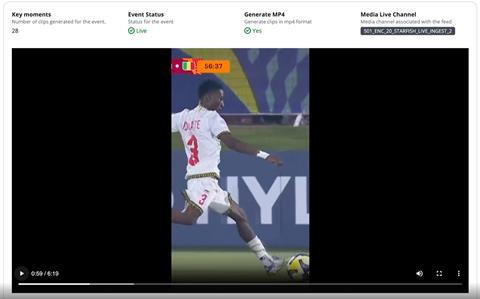

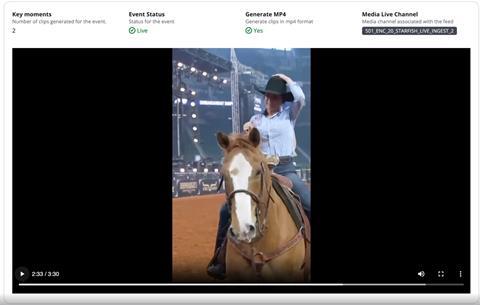

AWS Elemental Inference tracks subjects and keeps key action visible while converting horizontal to vertical video

AWS has launched AWS Elemental Inference, an AI service that automatically transforms horizontal video, in real time, into vertical formats optimised for mobile and social platforms.

AWS Elemental Inference also automatically detects and extracts highlight clips from live content for real-time distribution.

AWS says it will introduce further features and capabilities over the course of the year.

When creating vertical videos, the service tracks subjects and keeps key action visible, maintaining broadcast quality while automatically reformatting content for mobile viewing, says AWS.

It enables broadcasters and streamers to reach audiences on platforms including TikTok, Instagram Reels and YouTube Shorts without manual post-production work or in-house AI expertise.

Most live broadcasts are produced in landscape format, and converting them into vertical formats for mobile platforms typically requires time-consuming manual editing. As a result, broadcasters can miss viral moments and lose audiences to mobile-first destinations.

AWS Elemental Inference uses an agentic AI application: it analyses video in real time and automatically applies the right optimisations at the right moments, says AWS. No human intervention is required.

AWS Elemental Inference runs in parallel with the live video feed and produces the vertical version with a latency of 6 to 10 seconds, compared with minutes for traditional post-processing approaches.

AWS Elemental Inference is available now in US East (N. Virginia), US West (Oregon), Europe (Ireland), and Asia Pacific (Mumbai).

You can enable AWS Elemental Inference through the AWS Elemental MediaLive console or integrate it into your workflows using APIs.

There are no upfront costs or usage commitments; instead, you pay only for the features you use and the video you process.