Broadcast Sport hears from AWS and Genius Sports about what they think is next

From AI commentary at Wimbledon and skeletal tracking in the Championship, to digital humans at the Tour de France Femmes and watching Muhammad Ali in virtual reality, the tech behind sport broadcasting has been moving quickly in 2023.

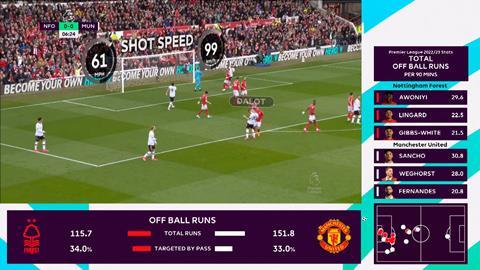

According to Genius Sports Group CTO Michael Patel, these advances could lead to more personalisation of broadcasts. He told Broadcast Sport, “We’re ultimately trying to personalise the experience so users can consume the broadcast in different ways. Right now, the experience that users interact with is very one size fits all.

“I believe that there’s a much more compelling fan engagement narrative if users can follow the players that they’re interested in, or highlight the statistics they want if they’re very heavy statistics guys, or they can learn about the game in more detail if they just don’t understand the game very well.

“There’s a huge range of sporting fans, from people who would just like to understand, for example in a basketball game, who’s who on the court, to others who would like to really understand the position and the pose of different players as they’re operating this field – for example, will somebody do a pick and roll?”

Patel doesn’t even rule out personalised commentary (“It’s certainly possible, right?”) and thinks it’s only a matter of time before people have control over what they watch from a broadcast. “I think people recognise the possibility. It’ll take time to understand what’s possible. It’ll take time for the technology to kind of be consumer ready.

“I think there’s belief that there’s a real market and there’s real demand for that type of engagement. The easy elevator pitch is you’re sitting courtside, you can consume the game from any angle. You can just switch to a different angle if you want. That’s an empowering thing for fans to have to elevate how meaningfully they can engage with the sport.”

Brendan Bouffler, head of developer relations, HPC engineering, at AWS, believes that existing technology can even guide the sport itself, making it a more exciting prospect for audiences. AWS works with F1 and already has experience of tailoring a sport to be more broadcast friendly, having helped the racing series allow for more wheel-to-wheel action.

“You get [an] incredible amount of downforce, like several G’s of downforce [with F1 cars],” Bouffler explained. “That’s why they can do the really tight corners and the tight manoeuvring. But if they get the front of a car, or come too close to the back of the car in front there’s a massive amount of wake turbulence because all that air flows very smoothly over the front of the car. So if the cars get too close to each other, what happens is you lose the downforce and the car just flips.

“So the F1 organisation spent a lot of time simulating this so they could work out what would have to be different about car designs, what would have to be different about the rules in order to keep the cars apart or allow them to come closer. Their objective was to actually get all of the teams to redesign the front and back to their cars to reduce the amount of turbulence that might mess with downforce so that they could get closer wheel to wheel.

“The whole goal was to try and allow them to get really close to each other so that the drivers could see the whites of each other’s eyes without actually causing a flip. So they figured it out and actually did the necessary rule change.”

The growth of AI could allow for this kind of innovation to happen more quickly: “Can we train models that allow the car designers, maybe the race rules designers, to do a simulation in a few seconds? To see what happens if we let those two cars get slightly closer at these angles? You know, it used to be that you might spend three weeks of computing getting there. Now? 15 seconds.”

Finally, when it comes to the technology actually used in broadcasts, Bouffler predicts greater flexibility, with cloud workflows, which are already used by some, making changes easier. In the past, “you could see the workflow in the graphics department from the animation guys all the way down through to the stills guys through to the folks who looked after the video, everything. You could watch the workflow by basically following the coax cable down the hall. The workflow wasn’t a logical thing. It was really quite a hard physical thing encoded in the building.

“You take some of the technology we’ve built, the supercomputers, the networking stuff, and you build it into the fabric of that food chain. Virtualise the entire thing, every single one of those elements. Now it’s just an EC2 instance streaming uncompressed video to the next one and to the next one. Suddenly on a Thursday night you can say, ‘I don’t think our workflow is very efficient.’ Drag, drop, drag, drop, rewire it on a screen, and suddenly you’ve changed the workflow in your organisation.”
